Over the last four years, generative Artificial Intelligence (Generative AI) has experienced an undeniable boom, both in the media and in practical applications. This widespread adoption has its roots in the 2017 paper Attention Is All You Need, published by researchers at Google, which introduced a new neural network architecture: the Transformer.

The Transformer represented a revolution in the sector, becoming the foundation for the development of numerous large language models, including those from Google, OpenAI, and Microsoft. Chatbots and models such as ChatGPT, Copilot, Gemini, and Llama are just some of the generative AI tools that have become household names.

But what exactly is generative Artificial Intelligence and how does it work? To answer these and other questions, we once again interviewed Mario Avdullaj, a developer and Artificial Intelligence enthusiast at Stesi.

What is Generative AI

Generative Artificial Intelligence, also known as Gen AI, is a type of Artificial Intelligence capable of generating content (text, images, video, or any other form of output) starting from inputs, known as prompts, provided by a human operator. “These models,” Mario tells us, “learn the patterns and structure of the data they are trained on to generate new data with similar characteristics and patterns.”

This functionality makes Generative AI an extremely versatile technology, capable of being applied across various sectors. These range from software development to healthcare, from finance to entertainment, through to marketing and, naturally, logistics.

Interested in the topic? Read also our article “AI, machine learning and supply chain”.

How Generative AI works

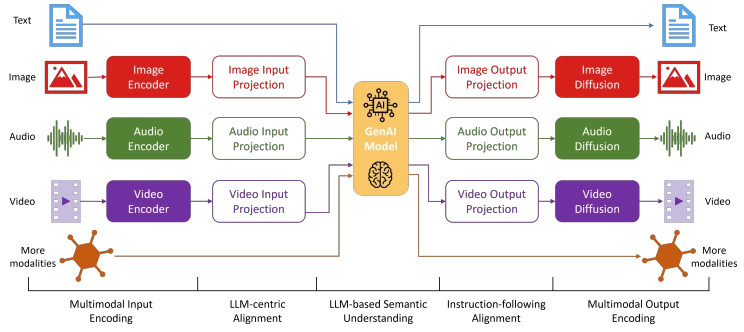

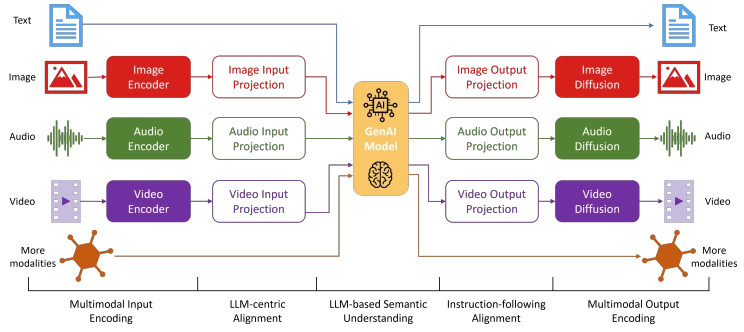

Generative AI is built by applying unsupervised or self-supervised learning techniques to a dataset. The capabilities of these systems depend directly on the type of data they were trained on, and the systems themselves can be unimodal or multimodal.

- Unimodal: they accept only one type of input, such as text.

- Multimodal: they accept multiple types of input (text, images, audio, video, etc.) and their combinations.

“To truly understand what generative Artificial Intelligence is, however, we need to start with so-called foundation models,” says Mario, “which are massive general-purpose models that can then be adapted to increasingly specific tasks through fine-tuning techniques.”

In short, foundation models are models trained on an enormous amount of data whose capabilities are largely generalized. Starting from these base models, it becomes possible to proceed with more specific tasks, specializing and training the model with more vertical data sets.

Large language models or LLMs

“One of the best-known categories of foundation models is certainly LLMs, or large language models, such as GPT,” Mario continues. This advanced type of Artificial Intelligence, specialized in natural language processing, is trained on a vast amount of text so that it can understand and generate human language in a coherent and contextually relevant manner.

“And the fact that it is ‘contextually relevant’ must be carefully emphasized, because therein lies the biggest difference compared to previous generations of generative models,” Mario stresses. Key characteristics of LLMs include size and complexity. Large language models have billions of parameters, which makes them particularly adept at grasping linguistic nuances and complex contexts.

The early days of Generative AI

But before ChatGPT, Copilot & Company, what was the state of the art in Gen AI?

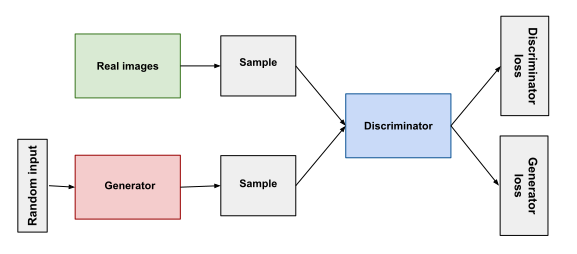

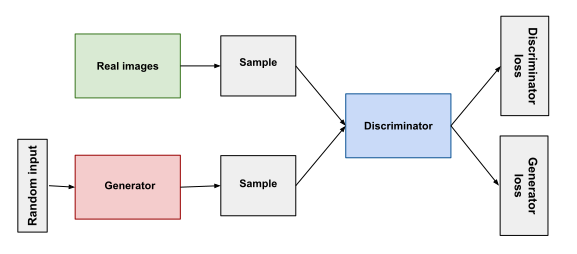

“As early as ten years ago, generative adversarial networks, known as GANs, were being built on two neural networks: a generator and a discriminator,” Mario explains.

- Generator: this is the neural network that tries to create new data. Its first outputs are essentially random, because its weights are initialized randomly. The generator then updates its weights based on a loss computed from the discriminator’s output.

- Discriminator: this is the neural network tasked with distinguishing which of the samples it receives are real (taken from the training set) and which are fake (produced by the generator). Its goal is to correctly classify samples as “real” or “fake”; the two networks repeat this process cyclically until the discriminator can no longer distinguish between the two.
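The interplay between generator and discriminator can be made concrete with a small sketch of the two losses. This is only an illustration under stated assumptions: it uses the common binary cross-entropy formulation (with the so-called non-saturating generator loss), and the discriminator scores below are invented numbers, not the output of a trained network.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: real samples should score near 1, generated ones near 0."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """The generator's loss is low when the discriminator scores its samples near 1."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs: probability that a sample is real.
early_real, early_fake = [0.9, 0.8], [0.1, 0.2]  # fakes are easy to spot
late_real, late_fake = [0.5, 0.5], [0.5, 0.5]    # discriminator is guessing

print("early:", discriminator_loss(early_real, early_fake), generator_loss(early_fake))
print("late: ", discriminator_loss(late_real, late_fake), generator_loss(late_fake))
```

In the "late" case the discriminator assigns 0.5 to everything, which corresponds to the point where it can no longer tell real from generated samples.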

“Past neural networks were therefore already capable of generating content, but with the advent of Transformers, neural network models based on attention mechanisms to process sequences of data, there was a revolutionary shift,” Mario continues.

The Transformer revolution

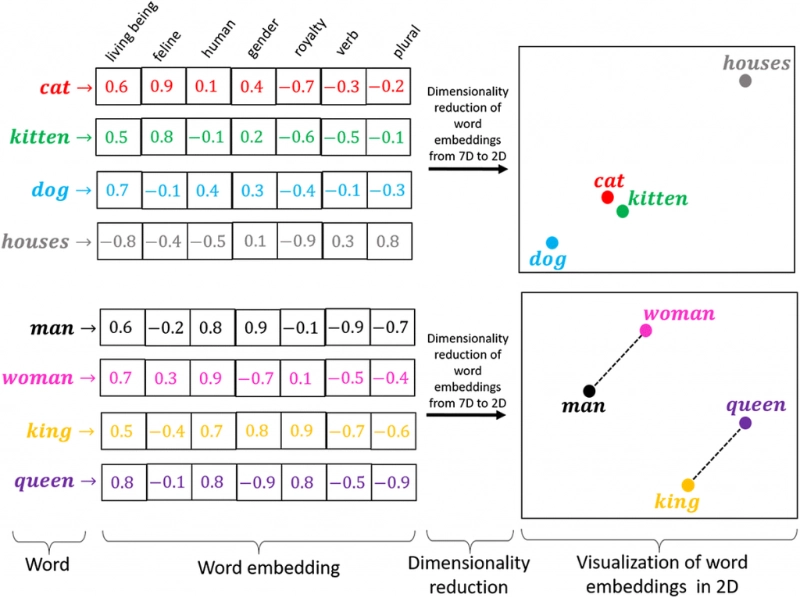

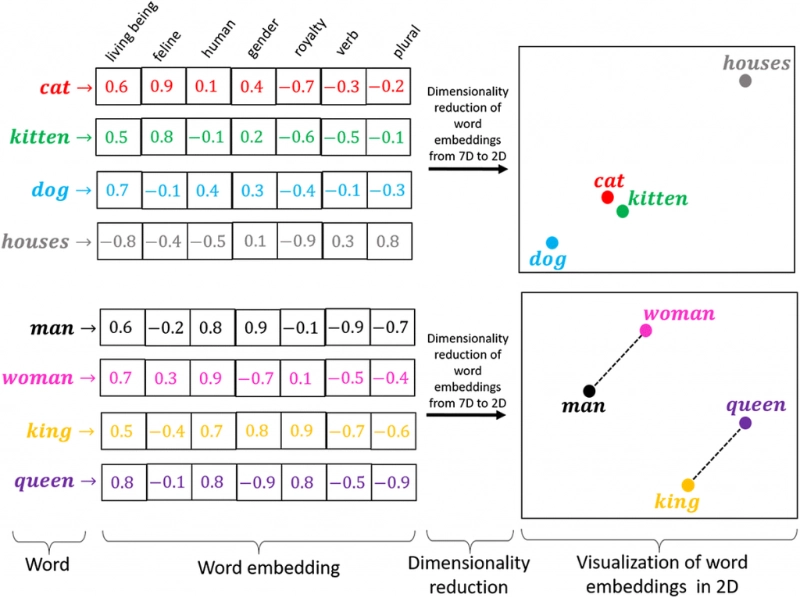

Starting from an initial prompt, Transformers split the text into units called tokens, each mapped to a numerical ID, which are in turn transformed into vectors through a word embedding table. “At every layer of this neural network,” Mario explains, “each token, or word, is contextualized within a context window along with other tokens via an attention mechanism. Naturally, words with a similar context like ‘cat’ and ‘feline’ will have numerical vectors that are relatively close to each other, while ‘cat’ and ‘dog’ will be further apart.”
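As a rough illustration of the embedding lookup and of the idea of distance between word vectors, here is a minimal sketch. The vocabulary, the three-dimensional vectors, and the function names are all invented for the example; real models learn vectors with hundreds or thousands of dimensions.

```python
import math

# Hypothetical vocabulary: each token is mapped to an integer ID.
VOCAB = {"cat": 0, "feline": 1, "dog": 2}

# Hypothetical embedding table: row i is the vector for token ID i.
EMBEDDING_TABLE = [
    [0.90, 0.80, 0.10],  # cat
    [0.85, 0.75, 0.20],  # feline
    [0.20, 0.90, 0.70],  # dog
]

def embed(word):
    """Look up the vector of a word through its token ID."""
    return EMBEDDING_TABLE[VOCAB[word]]

def cosine_distance(a, b):
    """1 minus cosine similarity: small values mean similar directions."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

print("cat-feline:", cosine_distance(embed("cat"), embed("feline")))
print("cat-dog:   ", cosine_distance(embed("cat"), embed("dog")))
```

With these toy values, "cat" and "feline" end up closer to each other than "cat" and "dog", mirroring the behavior described above.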

Each word is thus given a different relevance weight compared to others in the same sequence, allowing the AI to identify keywords within the text. “This attention mechanism allows the system to focus on the relevant parts of the input sequence given by the operator and generate an output based on the weight and importance of each word in relation to the context.”
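The weighting described here can be sketched with a simplified form of scaled dot-product self-attention. This is a minimal sketch, not the full mechanism: it assumes the token vectors themselves act as queries, keys, and values, whereas a real Transformer first applies learned projection matrices and uses several attention heads. The input vectors are invented.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """For each token, weight every token in the sequence and average their vectors."""
    dim = len(vectors[0])
    all_weights, outputs = [], []
    for query in vectors:
        # Similarity of this token with every token, scaled by sqrt(dim).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        all_weights.append(weights)
        # Weighted average of all token vectors.
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, vectors)) for j in range(dim)]
        )
    return all_weights, outputs

# Hypothetical two-dimensional token vectors for a three-token sequence.
tokens = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
weights, contextualized = self_attention(tokens)
print(weights[0])  # how much the first token attends to each token
```

Each row of `weights` says how much relevance one token gives to every other token in the same sequence; the first token attends more to the second, similar token than to the third.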

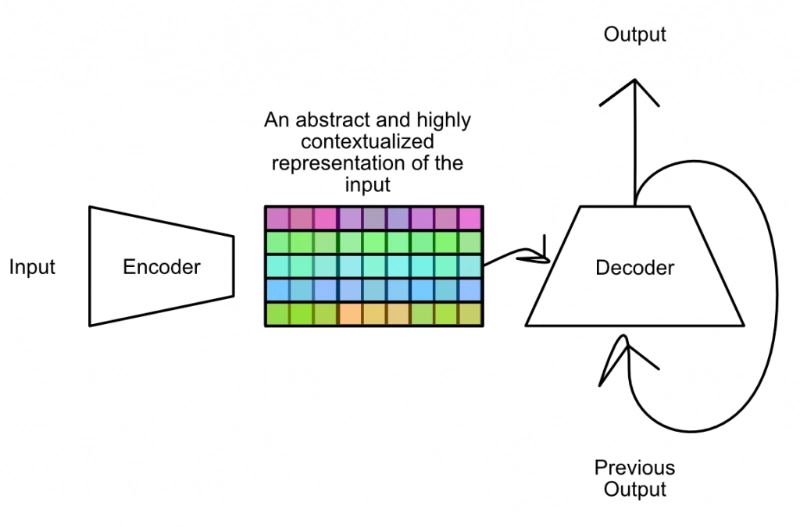

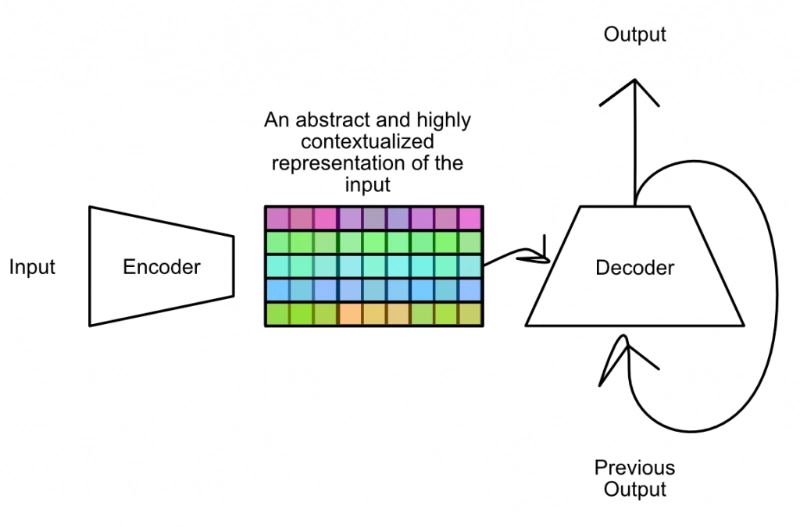

The original Transformer is composed of two main blocks, each made up of several stacked layers:

- Encoder: transforms the input into an internal and abstract representation.

- Decoder: generates an output based on the representation created by the encoder.

But how do we get to the output generation? “In effect, the decoder generates one token at a time, based on previously generated ones and the attention information. The token with the highest probability is selected as part of the output and this process continues repeatedly until the end-of-sequence token is generated. In short, these networks do nothing more than predict the most probable next word at each step,” Mario explains.
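The generation loop Mario describes, often called greedy decoding, can be sketched as follows. The probability table is entirely hypothetical and, unlike a real decoder, it conditions only on the previous token rather than on the whole sequence and the attention information.

```python
# Hypothetical next-token probabilities, keyed by the previous token only.
NEXT_TOKEN_PROBS = {
    "<start>": {"the": 0.6, "a": 0.4},
    "the": {"pallet": 0.7, "order": 0.3},
    "pallet": {"is": 0.8, "<eos>": 0.2},
    "is": {"ready": 0.9, "late": 0.1},
    "ready": {"<eos>": 1.0},
}

def greedy_decode(probs, start="<start>", eos="<eos>", max_tokens=20):
    """Pick the most probable next token until the end-of-sequence token appears."""
    output = []
    current = start
    for _ in range(max_tokens):
        candidates = probs[current]
        next_token = max(candidates, key=candidates.get)
        if next_token == eos:
            break
        output.append(next_token)
        current = next_token
    return output

print(" ".join(greedy_decode(NEXT_TOKEN_PROBS)))  # prints "the pallet is ready"
```

Production systems often replace the strict "highest probability" rule with sampling strategies to make the output less repetitive, but the token-by-token loop stays the same.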

Examples of Gen AI applications in logistics

Logistics is certainly one of the sectors where Generative AI can find application. Gen AI can be used to automatically generate reports or to carry out analyses based on operational data.

“For example, GPT-4 trained on logistics data could generate detailed reports on warehouse performance, analyzing key metrics such as travel times, stock levels, and error rates, while providing recommendations for implementing operational improvements. But there are many other application possibilities: we could use Generative AI for warehouse optimization, demand forecasting, better stock management, and waste reduction,” Mario explains.

Through the use of chatbots for operational assistance, for example, it becomes possible to assist warehouse operators by providing targeted answers to questions regarding operating procedures, product locations, or other requests, simply by connecting the generative model to the WMS database.

“We must also remember that Generative AI is increasingly moving towards multimodal logic, so operators could query it not only via text but also through images or even sounds, and the AI itself could respond by voice,” Mario continues.

Fine-tuning and RAG to verticalize Generative AI for the logistics world

Naturally, to become truly effective within the logistics context of a specific company, Generative AI must undergo a process of fine-tuning. “Fine-tuning is a process that involves additional training of an Artificial Intelligence model on a specific set of data, for example, taken from the Warehouse Management System,” Mario explains. Documentation, statistical data, and corporate data can be used to train the AI and make it capable of answering questions related to procedures, business processes, inventory management, logistics operations, and so on.

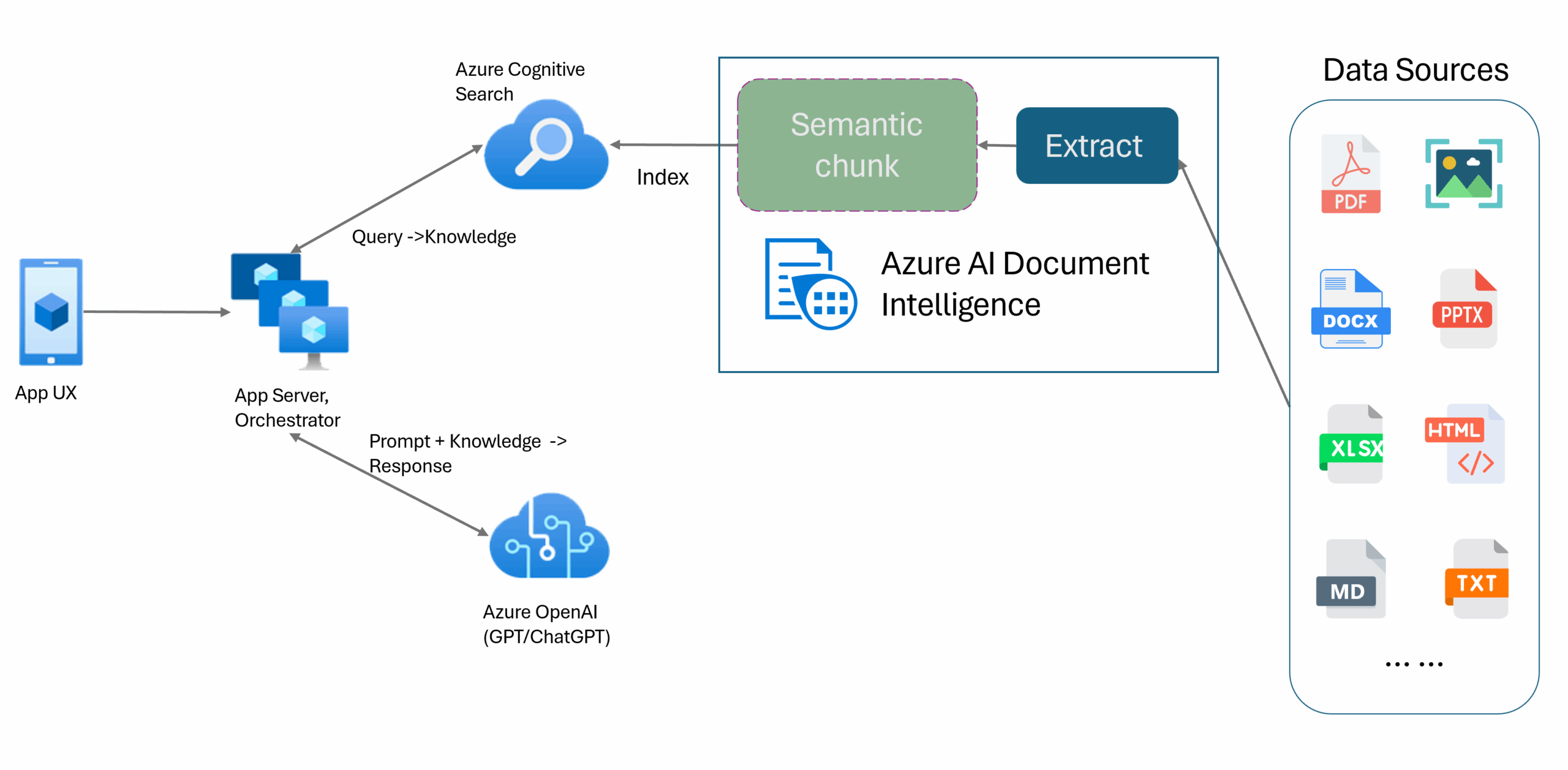

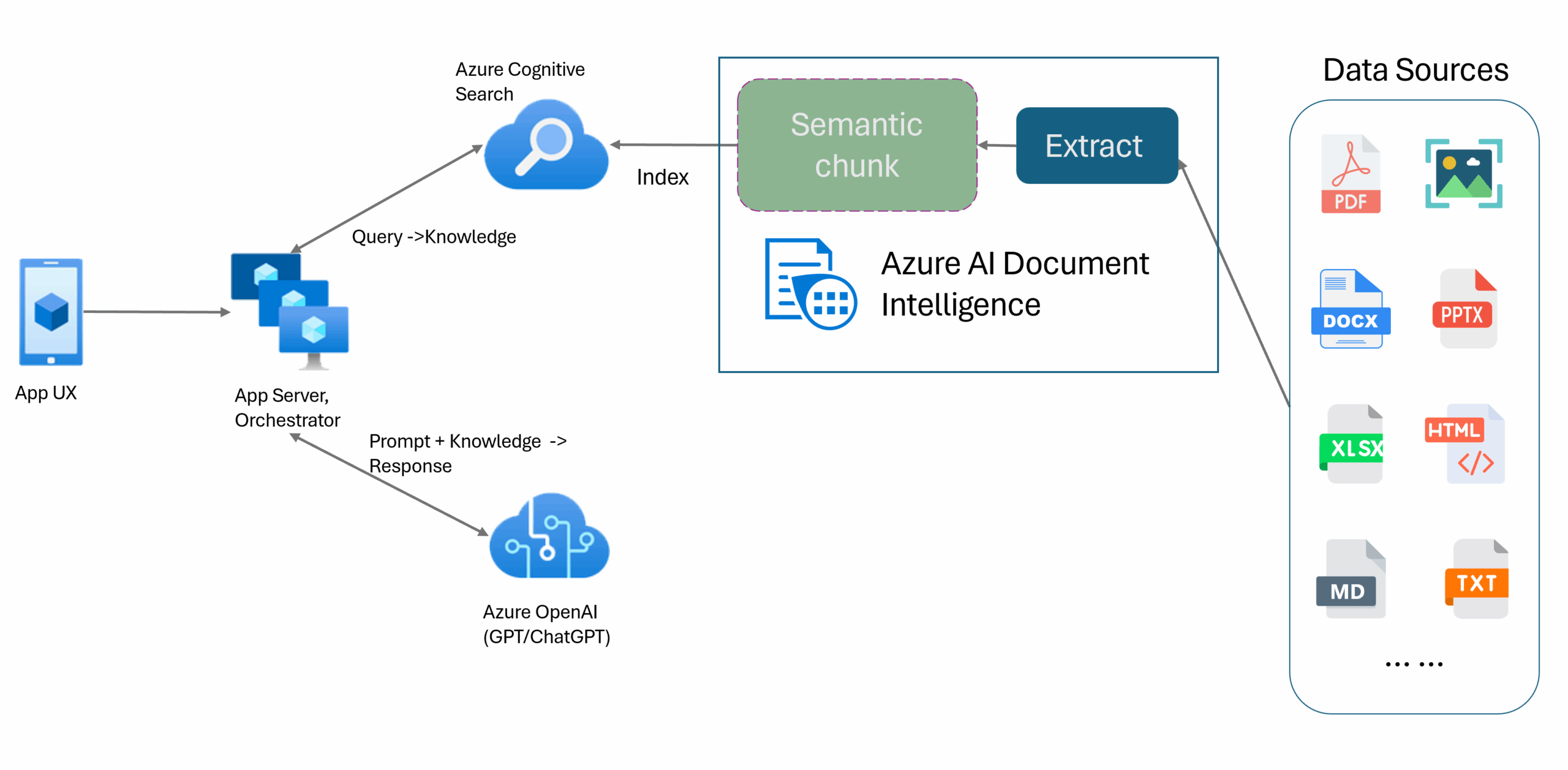

“Clearly, fine-tuning is a complex and equally expensive operation. That is why a valid alternative solution is what is called RAG, or Retrieval Augmented Generation,” Mario continues. Essentially, a separate search engine retrieves the relevant items from a much larger collection of data, and that material is added as extra context to the input given to the Gen AI. “For example, if I want to know what the most significant movements were in my warehouse over the last six months, I can leverage a search engine starting from a collection of data I already own and that is indexed, and then provide this specific context to the Generative AI, which then has all the data necessary to answer my question.”

In short, while fine-tuning implies additional training of the model on a specific dataset, the RAG approach is much more flexible and adaptable. It involves providing more context to the system during the input phase without the need to proceed with new training.
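The RAG flow can be illustrated with a deliberately simple sketch: retrieve the most relevant document, then place it in the prompt. Everything here is an assumption made for illustration: the documents are invented, the retrieval is a naive word-overlap score rather than a real search engine, and the call to the generative model is left out.

```python
import re

# Hypothetical, already-indexed warehouse notes (invented for illustration).
DOCUMENTS = [
    "Aisle 4 holds fragile goods; pick them with the padded trolley.",
    "Returns must be scanned at dock 2 before restocking.",
    "Cycle counts for fast-moving items run every Monday morning.",
]

def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, documents):
    """Combine the retrieved context with the operator's question."""
    context = "\n".join(retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

prompt = build_prompt("Where must returns be scanned?", DOCUMENTS)
print(prompt)  # this text would then be sent to the generative model
```

The model itself is never retrained: only the prompt changes, which is why this approach is more flexible than fine-tuning.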

The Stesi experience

Stesi knows a thing or two about solutions of this second type, thanks to the collaboration with the students of the Flaminio high school in Vittorio Veneto, which led to an interesting project featuring Artificial Intelligence. “During the project, a chatbot called Stesi GPT was built, which utilized OpenAI’s GPT model while leveraging Azure to improve the performance and quality of the data extracted from the collection,” Mario says.

“The project uses Cognitive Search, a search tool, to index and retrieve data which, combined with the initial prompt, allows Stesi GPT to provide targeted answers to the questions of warehouse operators.” The interesting aspect is that, currently, the operator is provided with a text response because the project is unimodal, but nothing rules out future scenarios in which the operator could be provided with images, videos, or even sounds.

This project represents a concrete example of how Generative AI can be verticalized and optimized for specific applications, improving efficiency and precision in logistics operations.

Want to delve deeper into the topic or learn more about silwa, Stesi’s proprietary WMS? Contact us to find out more.